OpenGradient

Every AI application today relies on a single point of trust. When an AI agent manages a portfolio, approves a loan, or moderates content, there is no way to independently verify what model ran, what prompt was used, or whether the output was tampered with. Users are asked to trust the operator - and the operator alone.

OpenGradient changes this. It is a decentralized network purpose-built for AI inference, where every computation can be cryptographically verified without trusting any single party. Models run on a permissionless network of specialized nodes, proofs are settled on-chain, and the entire pipeline - from request to response - is auditable.

The Problem

AI infrastructure is consolidating into a handful of providers, and that concentration creates three problems:

Opacity: When an LLM makes a decision that affects money, health, or governance, there is no way to prove what happened inside the black box. You cannot verify which model version ran, what system prompt was injected, or whether the response was filtered or modified before reaching you.

Single points of failure: If the provider goes down, rate-limits you, or changes their model behavior, your application breaks. There is no fallback and no recourse.

Trust without verification: Agent operators or APIs can silently swap models, inject content, or log prompts. Users must accept this on faith. For applications where correctness matters - financial agents, medical reasoning, audit trails - faith is not enough.

How OpenGradient Works

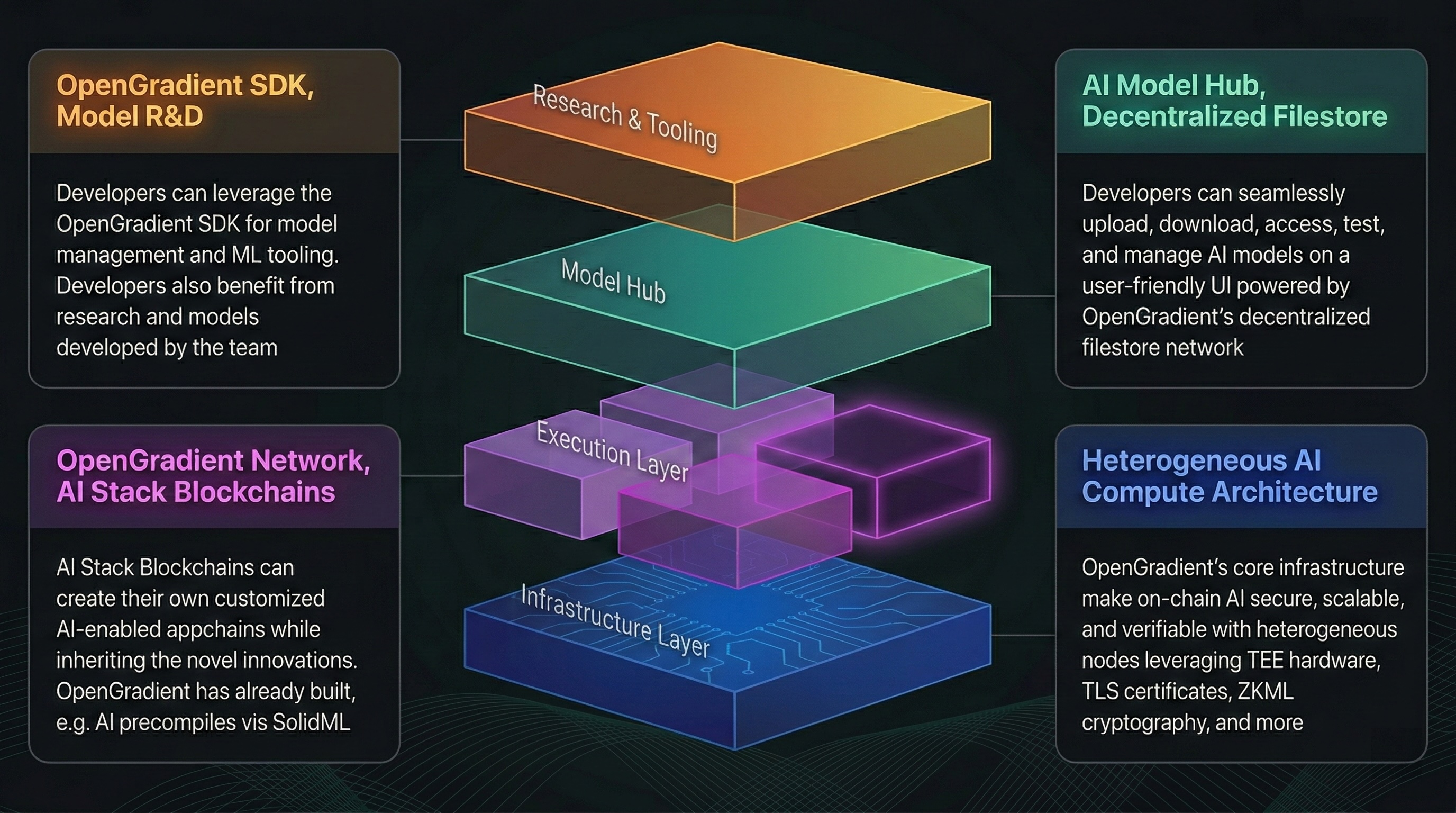

OpenGradient is not a wrapper around existing AI APIs. It is a vertically integrated infrastructure stack, from a purpose-built blockchain to specialized compute nodes, designed around one principle: AI inference should be verifiable by default.

The core insight is that AI workloads have fundamentally different requirements than financial transactions. A model inference takes seconds, not milliseconds. It requires GPUs, not CPUs. The data involved is large and unstructured. Conventional blockchain designs - where every validator re-executes every computation - simply do not work.

OpenGradient solves this with a Hybrid AI Compute Architecture (HACA) that separates execution from verification. Inference requests go directly to specialized compute nodes and return with web2-like latency. Proofs and attestations are then settled asynchronously on a dedicated verification layer. The result: you get the performance of centralized infrastructure with the trust guarantees of a decentralized network.
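A rough cost model shows why separating execution from verification matters. This is a back-of-the-envelope sketch with hypothetical parameters (`inference`, `verify`, `validators`), not measured OpenGradient figures. Under re-execution every validator pays the full inference cost. Under HACA one node runs the model and each full node only checks a proof or attestation.

```python
from dataclasses import dataclass

@dataclass
class Costs:
    inference: float  # hypothetical cost of one inference (e.g. GPU-seconds)
    verify: float     # hypothetical cost of checking one proof or attestation
    validators: int   # number of validators / full nodes

def reexecution_cost(c: Costs) -> float:
    """Conventional design: every validator re-runs the inference."""
    return c.validators * c.inference

def haca_cost(c: Costs) -> float:
    """Execution separated from verification: one node runs the model,
    and every full node only checks the resulting proof."""
    return c.inference + c.validators * c.verify

# Illustrative numbers only.
c = Costs(inference=2.0, verify=0.001, validators=100)
print(reexecution_cost(c), haca_cost(c))
```

As long as checking a proof is much cheaper than running the model, the HACA total stays close to a single inference regardless of validator count, while the re-execution total grows linearly with it. For ZKML the prover overhead raises the single inference cost, but it is paid once rather than once per validator.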

For a technical deep dive into HACA and how node specialization works, see the Architecture section.

What Makes It Different

Node Specialization

Instead of a monolithic validator set where every node does everything, OpenGradient uses specialized node types that each handle what they are good at:

- Full Nodes run consensus, manage the ledger, verify proofs, and handle payment settlement - they never touch GPUs or run models.

- Inference Nodes are stateless workers that serve inference requests. They come in two flavors: LLM Proxy Nodes, which route requests to providers like OpenAI and Anthropic through TEE enclaves, and Local Inference Nodes, which run open-source models directly on GPU hardware.

- Data Nodes operate in secure enclaves to provide trusted access to external data - price feeds, APIs, databases - with attestations that prove the data was not tampered with.

- Decentralized Storage on Walrus keeps model files and large proofs off-chain, referenced by blob IDs recorded on the ledger.

This division of labor means each node type can be independently scaled, optimized, and secured for its specific workload. It is also what enables OpenGradient to offer web2-like latency while maintaining full verifiability, something that is architecturally impossible in a design where every validator must re-execute every inference.
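The division of labor can be pictured as a routing table from request kinds to node roles. The sketch below is illustrative only: the request-kind strings, `NodeRole`, and `route` are hypothetical names, not part of the OpenGradient SDK or protocol.

```python
from enum import Enum

class NodeRole(Enum):
    FULL = "full"                        # consensus, ledger, proof verification, settlement
    LLM_PROXY = "llm_proxy"              # forwards LLM requests to providers through a TEE
    LOCAL_INFERENCE = "local_inference"  # runs open-source models on local hardware
    DATA = "data"                        # attested access to external data

# Hypothetical mapping from request kind to the node role that serves it.
ROUTES = {
    "llm_chat": NodeRole.LLM_PROXY,
    "model_inference": NodeRole.LOCAL_INFERENCE,
    "price_feed": NodeRole.DATA,
    "submit_proof": NodeRole.FULL,
}

def route(request_kind: str) -> NodeRole:
    """Return the node role responsible for a request kind."""
    try:
        return ROUTES[request_kind]
    except KeyError:
        raise ValueError(f"no node role handles {request_kind!r}") from None
```

Keeping the mapping explicit means a new workload type gets its own role and pool of nodes instead of adding load to every node.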

Verification Without Re-Execution

Verifying AI inference does not require re-running it. OpenGradient supports multiple verification methods, each suited to different workloads:

| Method | How It Works | Trade-off |
|---|---|---|
| TEE | Inference runs inside a Trusted Execution Environment. Hardware attestations prove the enclave ran approved code and was not tampered with. | Negligible overhead, strong guarantees. Used for LLM inference. |
| ZKML | A zero-knowledge proof is generated alongside the inference, proving the correct model produced the correct output for a given input. | High overhead (1000-10000x), cryptographic certainty. Used for high-stakes ML models. |
| Vanilla | Signature verification only, no cryptographic proof of execution. | Zero overhead, no verification guarantees. Suitable for low-risk workloads. |
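The TEE row boils down to two checks: the enclave's code measurement is on an approved list, and a signature binds that measurement to the returned output. The sketch below assumes a hypothetical allowlist (`APPROVED_MEASUREMENTS`) and uses a shared-key HMAC purely as a stand-in; real hardware attestations are signed with vendor-rooted asymmetric keys and carry more fields.

```python
import hashlib
import hmac

# Hypothetical allowlist of approved enclave code measurements (hashes of the enclave image).
APPROVED_MEASUREMENTS = {hashlib.sha256(b"approved-enclave-image-v1").hexdigest()}

def sign_attestation(key: bytes, measurement: str, output: bytes) -> str:
    """Stand-in for the hardware signature: binds the measurement to a hash of the output."""
    message = measurement.encode() + hashlib.sha256(output).digest()
    return hmac.new(key, message, hashlib.sha256).hexdigest()

def verify_attestation(key: bytes, measurement: str, output: bytes, signature: str) -> bool:
    """Accept only outputs produced by approved code and not modified afterwards."""
    if measurement not in APPROVED_MEASUREMENTS:
        return False  # the enclave ran code that is not on the allowlist
    expected = sign_attestation(key, measurement, output)
    return hmac.compare_digest(expected, signature)  # constant-time comparison
```

A Vanilla check corresponds roughly to the signature comparison alone, without the measurement allowlist, which is why it says nothing about what code actually ran.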

Developers choose the verification level that matches their risk profile. A chatbot might use TEE. A DeFi liquidation model might use ZKML. A content recommendation might use Vanilla. This is a conscious design choice - not every inference needs the same level of trust, and forcing uniform verification on all workloads is wasteful.
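The examples above can be read as a simple policy. This is a hypothetical sketch: `Verification`, `choose_verification`, and the three risk levels are illustrative names, not OpenGradient configuration options.

```python
from enum import Enum
from typing import Literal

class Verification(Enum):
    VANILLA = "vanilla"  # signature check only, no proof of execution
    TEE = "tee"          # hardware attestation, negligible overhead
    ZKML = "zkml"        # zero-knowledge proof, high overhead

Risk = Literal["low", "medium", "high"]

def choose_verification(risk: Risk, is_llm: bool) -> Verification:
    """Hypothetical policy mirroring the examples in the text."""
    if risk not in ("low", "medium", "high"):
        raise ValueError(f"unknown risk level: {risk!r}")
    if risk == "low":
        return Verification.VANILLA  # e.g. content recommendation
    if is_llm:
        return Verification.TEE      # e.g. chatbot; the table lists TEE for LLM inference
    if risk == "high":
        return Verification.ZKML     # e.g. DeFi liquidation model
    return Verification.TEE
```

For example, `choose_verification("high", is_llm=False)` selects ZKML for a liquidation model, while a chatbot at medium risk gets TEE.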

Asynchronous Settlement

Inference and verification happen on separate timelines. When you make a request:

- It goes directly to an inference node - no blockchain in the critical path.

- The result comes back immediately with web2-like latency.

- After the response is returned, the proof is submitted, validated by full nodes during consensus, and permanently recorded on the ledger.

This separation is what makes OpenGradient practical for real applications. You do not wait for block confirmation to get an LLM response. But every response is eventually settled, verified, and auditable.
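The request flow above can be sketched with `asyncio`: the caller receives the inference output immediately, and settlement runs as a background task. `run_inference`, `settle`, and the list-based `ledger` are hypothetical stand-ins with simulated delays, not OpenGradient APIs.

```python
import asyncio

async def run_inference(prompt: str) -> tuple[str, str]:
    # Hypothetical inference node call: returns the output and its proof/attestation.
    await asyncio.sleep(0.01)  # simulated inference latency
    return f"response to {prompt!r}", f"proof-for-{prompt}"

async def settle(proof: str, ledger: list[str]) -> None:
    # Hypothetical settlement: full nodes validate the proof, then it is recorded.
    await asyncio.sleep(0.05)  # simulated consensus delay, off the critical path
    ledger.append(proof)

async def handle_request(prompt: str, ledger: list[str], pending: set[asyncio.Task]) -> str:
    output, proof = await run_inference(prompt)
    task = asyncio.create_task(settle(proof, ledger))
    pending.add(task)                      # keep a reference until the task finishes
    task.add_done_callback(pending.discard)
    return output                          # returned before the proof is settled

async def main():
    ledger: list[str] = []
    pending: set[asyncio.Task] = set()
    output = await handle_request("hello", ledger, pending)
    settled_at_response = len(ledger)      # nothing settled yet when the caller gets output
    await asyncio.gather(*pending)         # later, settlement completes
    return output, settled_at_response, ledger

print(asyncio.run(main()))
```

The `pending` set exists only so that background tasks are not garbage-collected before they finish; a real service would also need retries and durable storage for unsettled proofs.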

Products

OpenGradient's infrastructure is exposed through purpose-built products:

- … $OPG tokens.

- Model Hub: Decentralized model repository on Walrus. Upload any model - from logistic regression to Stable Diffusion - and it becomes instantly available for verified inference across the network.

- MemSync: Long-term memory layer for AI applications. Automatically extract and classify memories from conversations, build user profiles, and maintain context across sessions - all backed by verifiable LLM inference.

- Python SDK: The primary interface for building on OpenGradient. LLM inference, model management, and Model Hub integration via pip install opengradient.

What You Can Build

AI Agents with Provable Reasoning: Build autonomous agents where every LLM call is cryptographically signed with the exact prompt used. When your agent moves money, approves transactions, or makes decisions, anyone can verify the reasoning chain on-chain - not just trust that it happened correctly.
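One way to make an agent's reasoning chain tamper-evident is to link each LLM call record to the hash of the previous one. This is a generic hash-chain sketch, not OpenGradient's record format: `append_call`, `verify_chain`, and the record fields are hypothetical, and per-call signatures are omitted for brevity.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record

def record_hash(record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes identically.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()

def append_call(chain: list[dict], model: str, prompt: str, output: str) -> None:
    """Record one LLM call, linked to the hash of the previous record."""
    prev = record_hash(chain[-1]) if chain else GENESIS
    chain.append({"prev": prev, "model": model, "prompt": prompt, "output": output})

def verify_chain(chain: list[dict]) -> bool:
    """Check that every record points at the hash of the record before it."""
    prev = GENESIS
    for record in chain:
        if record["prev"] != prev:
            return False
        prev = record_hash(record)
    return True
```

Editing any earlier record breaks the link stored in the next one. The last record is only protected once its hash is anchored somewhere tamper-evident; treating on-chain settlement as that anchor is an assumption of this sketch.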

Verifiable LLM Services: Access GPT-4, Claude, Grok, Gemini and more through a unified API where inference is TEE-verified. Useful for any application where you need to prove to a third party what the AI actually said - audit trails, compliance, dispute resolution.

Privacy-Preserving Applications: TEE nodes process prompts inside hardware enclaves. The node operator cannot see, log, or manipulate your requests. This makes OpenGradient suitable for sensitive workloads - medical reasoning, financial analysis, private conversations - without trusting the infrastructure provider.

Personalized AI with Persistent Memory: Use MemSync to build AI applications that remember users across sessions. Memory extraction, classification, and profile generation all run on OpenGradient's verified infrastructure, so the memory pipeline itself is auditable.

Decentralized Model Hosting: Upload models to the Model Hub for permissionless access. Any model stored on Walrus is available for inference on the network - no gatekeepers, no approval process, no vendor lock-in.
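Because model files live off-chain and the ledger only holds references, a client that pulls a model should check the bytes against what the ledger recorded. The sketch assumes, hypothetically, that the ledger stores a SHA-256 digest next to each blob ID; `ledger` and `storage` are plain dicts standing in for the chain and for Walrus, and `fetch_verified` is an illustrative name, not a Model Hub API.

```python
import hashlib

def fetch_verified(
    model_name: str,
    ledger: dict[str, tuple[str, str]],  # model name -> (blob ID, expected SHA-256 hex digest)
    storage: dict[str, bytes],           # blob ID -> raw model bytes
) -> bytes:
    """Fetch a model blob and refuse it if it does not match the recorded digest."""
    blob_id, expected_digest = ledger[model_name]
    data = storage[blob_id]
    if hashlib.sha256(data).hexdigest() != expected_digest:
        raise ValueError(f"blob {blob_id} does not match the digest recorded for {model_name}")
    return data
```

Checking the digest before loading means a node cannot silently serve a different model under the same name.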

Coming Soon

On-chain ML execution via PIPE is under development on our alpha testnet:

- Smart Contract Integration: Call AI models natively from Solidity via precompiles. Build DeFi protocols with dynamic fee models, ML-based risk scoring, or any on-chain logic that needs model inference.

- Atomic AI Transactions: Model inference executes atomically within transactions - the result is part of the state transition, not an external oracle call.

- Composable AI Workflows: Chain multiple models together with mixed verification methods. Use ZKML for the risk model and TEE for the LLM reasoning, all in a single transaction.
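The atomic, mixed-verification workflow described above can be modeled as "apply all steps or none". This toy model is not the PIPE precompile interface: `Step`, `execute_atomically`, and the `run`/`verify` callables are hypothetical, and verification is reduced to a boolean check per step.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Step:
    name: str
    method: str                     # verification method label, e.g. "zkml" or "tee"
    run: Callable[[dict], dict]     # computes state updates from the working state
    verify: Callable[[dict], bool]  # checks the step's proof or attestation

def execute_atomically(state: dict, steps: list[Step]) -> dict:
    """Return the new state only if every step verifies; otherwise raise and change nothing."""
    working = dict(state)  # scratch copy; the caller's state is never mutated
    for step in steps:
        updates = step.run(working)
        if not step.verify(updates):
            raise RuntimeError(f"verification failed at step {step.name!r} ({step.method})")
        working.update(updates)
    return working

steps = [
    Step("risk", "zkml", lambda s: {"risk": 0.2}, lambda u: True),
    Step("reasoning", "tee",
         lambda s: {"decision": "approve" if s["risk"] < 0.5 else "reject"},
         lambda u: True),
]
print(execute_atomically({"balance": 100}, steps))
```

Because updates accumulate in a scratch copy, a failed check at any step leaves the original state untouched, which is the all-or-nothing property of an atomic transaction.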

TIP

You can find the OpenGradient network explorer at https://explorer.opengradient.ai.